Bad leads rarely look bad in a dashboard. They sound wrong on the call, when the caller asks for a service you do not offer, has no budget, or is nowhere near your market.

A solid call recording QA framework turns those moments into usable data. In 2026, AI transcripts, auto summaries, and live prompts make review faster, but the real win is simple: better lead quality, better coaching, and clearer feedback between marketing and sales. For marketing leaders, sales ops, contact center managers, QA teams, and small business owners, that loop matters more than ever.

Why Your Team Needs a Call Recording QA Framework Now

When you review calls by source, weak patterns stop hiding. One landing page sends high-intent buyers. Another sends price shoppers. A paid keyword may look fine in reporting but keep attracting the wrong service request.

That matters because lead quality is not only a sales issue. In many small businesses, digital marketing, SEO, performance marketing, social media marketing, and website development all shape who calls and what they expect. A good QA program shows whether poor results come from bad traffic, a weak script, slow follow-up, or a broken handoff.

In 2026, many teams can search transcripts, tag objections, and compare outcomes within minutes. Some tools now flag missed qualification questions during the call, not days later. Start with proven call center QA best practices, then pair the findings with a tighter Google Ads campaign structure for qualified leads if paid search is a major source.

Core Components of an Effective Call Recording QA Framework

Most small businesses do not need a giant scorecard. They need a system that answers four questions: Was the lead a fit, did the agent handle the call well, was the next step clear, and did marketing attract the right person in the first place?

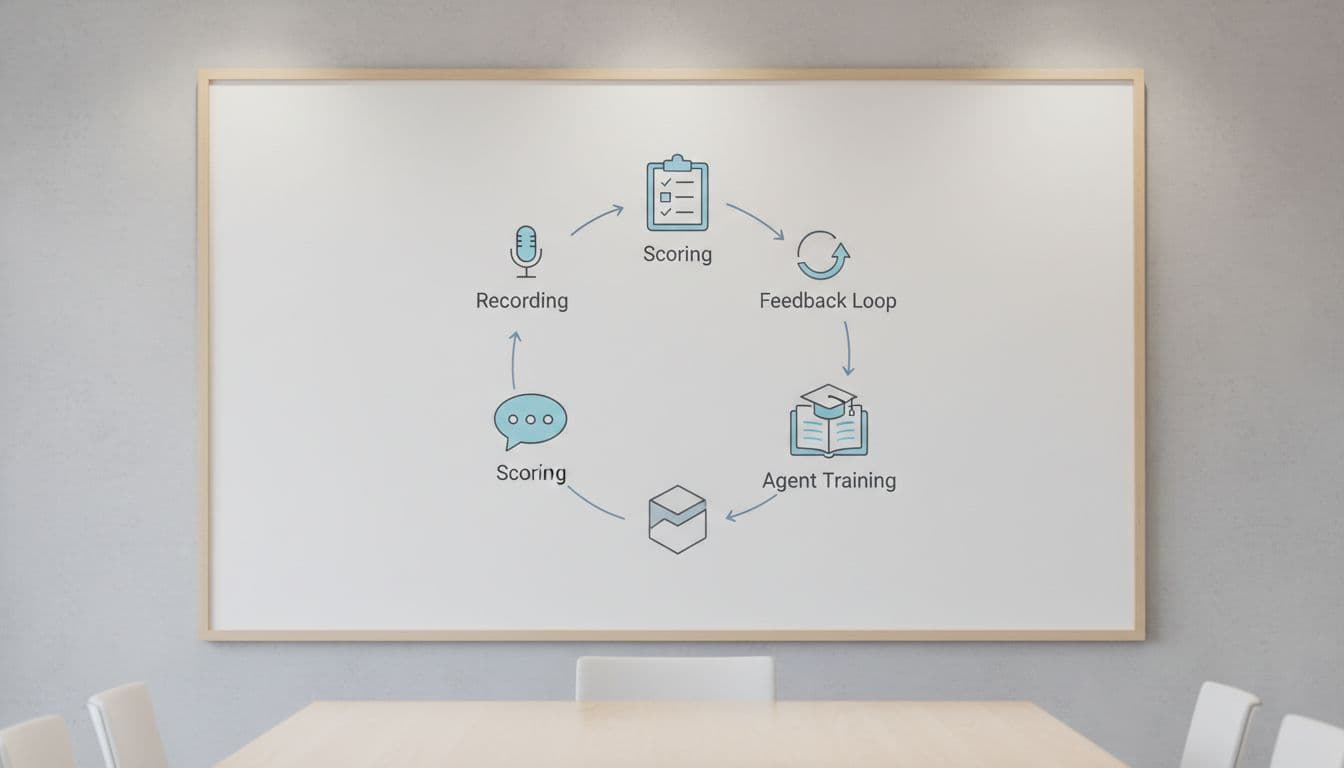

Build your framework around five parts:

- Record and tag calls by source, campaign, landing page, and agent.

- Score the same few behaviors on every reviewed call.

- Connect scores to booked meetings, qualified opportunities, and sales.

- Coach agents weekly with clips from real calls.

- Share themes with marketing and sales ops every month.

If marketing and sales use different rules for “qualified,” QA turns into opinion.
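One way to keep "qualified" from drifting is to write the shared definition down as a single rule both teams can read. The sketch below is only an illustration: the field names, `MIN_BUDGET`, and `MAX_TIMELINE_DAYS` are hypothetical placeholders, and the five checks mirror the fit, service area, budget, urgency, and decision-maker criteria used in this article.

```python
from dataclasses import dataclass

# Hypothetical thresholds; replace with values both teams agree on.
MIN_BUDGET = 500.0
MAX_TIMELINE_DAYS = 90


@dataclass(frozen=True)
class LeadFacts:
    """Facts a reviewer captures from one call (field names are illustrative)."""
    service_fit: bool        # caller wants a service you actually offer
    in_service_area: bool    # caller is inside your market
    budget: float            # caller's stated budget
    timeline_days: int       # how soon the caller wants to start
    is_decision_maker: bool  # caller can approve the purchase


def is_qualified(lead: LeadFacts) -> bool:
    """Return True only when every shared criterion is met."""
    return (
        lead.service_fit
        and lead.in_service_area
        and lead.budget >= MIN_BUDGET
        and lead.timeline_days <= MAX_TIMELINE_DAYS
        and lead.is_decision_maker
    )
```

Because the rule lives in one place, a change to the budget floor or the timeline window shows up for marketing and sales at the same time.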

Also set rules for consent, storage, and access so recordings stay compliant and only the right people can hear them. A practical sales call recording guide covers the legal side. Then connect call outcomes to enhanced conversions for Google Ads leads so source quality is based on real outcomes, not guesswork.

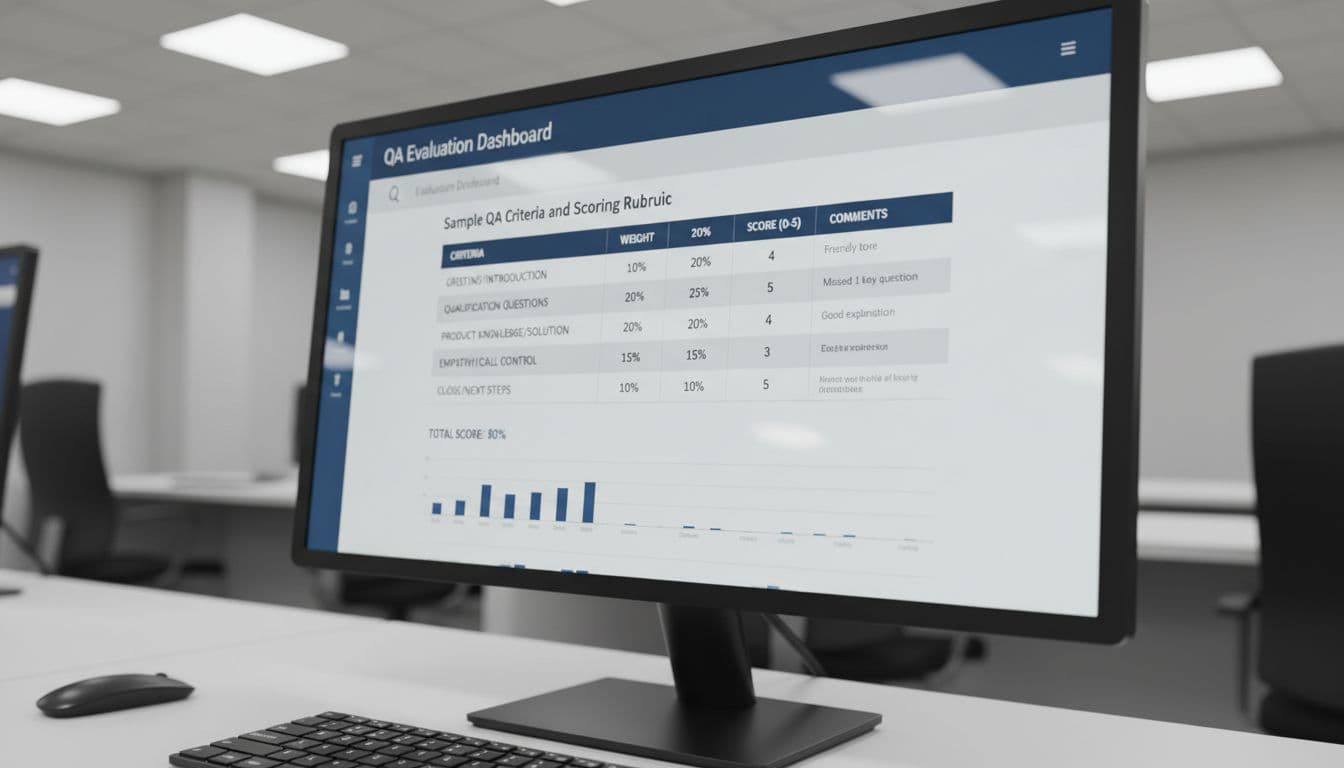

Sample QA Criteria and Scoring Rubric

Start with a short rubric. If you score too many things, reviewers drift and agents ignore the feedback. Keep the focus on lead quality, conversion readiness, and clean handoffs.

This simple model works well for service businesses:

| QA area | Weight | Full-score standard |
|---|---|---|
| Opening and trust | 10 | Clear greeting, sets purpose, confirms caller context |
| Qualification depth | 25 | Captures need, location, budget, timeline, decision role |
| Fit and urgency | 25 | Confirms service fit, urgency, and buying intent |
| Next-step control | 20 | Books appointment or sets a specific follow-up |
| CRM and compliance | 20 | Logs source, notes, consent, and outcome correctly |

Set score bands before rollout. A score of 85 to 100 means the call was sales-ready. A 70 to 84 call needs coaching. Anything under 70 needs manager review because the agent likely missed fit, urgency, or the close. Run twice-monthly calibration sessions, and use a simple call center QA checklist to keep scoring steady across reviewers.
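The rubric and bands can be expressed as a small scoring function. This is a minimal sketch, not a prescribed tool: the weights and band cutoffs come from the table and paragraph above, while the area keys, the 0-to-1 rating scale per area, and the choice to treat any score below 85 (including fractional ones such as 84.5) as "needs coaching" are assumptions made for illustration.

```python
# Weights copied from the rubric table; they sum to 100.
WEIGHTS = {
    "opening_trust": 10,
    "qualification_depth": 25,
    "fit_urgency": 25,
    "next_step_control": 20,
    "crm_compliance": 20,
}


def total_score(ratings: dict[str, float]) -> float:
    """Combine per-area ratings (0.0 to 1.0 of the full-score standard) into 0-100."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing rubric areas: {sorted(missing)}")
    for area in WEIGHTS:
        if not 0.0 <= ratings[area] <= 1.0:
            raise ValueError(f"rating for {area} must be between 0 and 1")
    return sum(weight * ratings[area] for area, weight in WEIGHTS.items())


def score_band(score: float) -> str:
    """Map a 0-100 score to the bands described above."""
    if score >= 85:
        return "sales-ready"
    if score >= 70:
        return "needs coaching"
    return "manager review"


# Example: strong call, but the agent only half-secured the next step.
example = {
    "opening_trust": 1.0,
    "qualification_depth": 0.8,
    "fit_urgency": 1.0,
    "next_step_control": 0.5,
    "crm_compliance": 1.0,
}
print(total_score(example), score_band(total_score(example)))
```

Keeping the weights in one dictionary makes calibration changes visible: if reviewers agree to shift weight toward qualification depth, only that mapping changes.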

Key KPIs to Measure Lead Quality Improvements

A QA score by itself is not a business metric. The real test is whether lead quality improves across sources, agents, and outcomes. This same view helps contact center managers spot training gaps and helps marketing leaders trim bad spend faster.

Track a small KPI set and review it every month:

- Qualified lead rate by source

- Appointment set rate after the first call

- Lead-to-opportunity rate by agent and campaign

- No-fit or wrong-service rate by landing page

- Average QA score and coaching completion

- Speed to follow-up on high-intent calls

The most useful view compares source quality with conversion readiness. If organic traffic grows but no-fit calls rise, review your pages with this lead-gen SEO audit checklist 2026. If paid volume rises while booked jobs stay flat, the issue may sit in targeting, landing-page promise, or agent handling. QA makes that visible fast.
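To make the source comparison concrete, the sketch below computes three of the rates listed above from a list of reviewed calls. The record layout (`source`, `qualified`, `appointment_set`, `no_fit`) is a hypothetical export format, not the schema of any particular call-tracking tool.

```python
from collections import defaultdict


def rates_by_source(calls: list[dict]) -> dict[str, dict[str, float]]:
    """Return qualified, appointment-set, and no-fit rates for each source."""
    counts = defaultdict(
        lambda: {"calls": 0, "qualified": 0, "appointment_set": 0, "no_fit": 0}
    )
    for call in calls:
        bucket = counts[call["source"]]
        bucket["calls"] += 1
        for flag in ("qualified", "appointment_set", "no_fit"):
            bucket[flag] += int(call[flag])  # True counts as 1, False as 0

    return {
        source: {
            "qualified_rate": b["qualified"] / b["calls"],
            "appointment_set_rate": b["appointment_set"] / b["calls"],
            "no_fit_rate": b["no_fit"] / b["calls"],
        }
        for source, b in counts.items()
    }


# Illustrative data only: four paid-search calls and two organic calls.
sample_calls = [
    {"source": "paid_search", "qualified": True, "appointment_set": True, "no_fit": False},
    {"source": "paid_search", "qualified": False, "appointment_set": False, "no_fit": True},
    {"source": "paid_search", "qualified": False, "appointment_set": False, "no_fit": True},
    {"source": "paid_search", "qualified": False, "appointment_set": False, "no_fit": False},
    {"source": "organic", "qualified": True, "appointment_set": True, "no_fit": False},
    {"source": "organic", "qualified": True, "appointment_set": False, "no_fit": False},
]
for source, rates in rates_by_source(sample_calls).items():
    print(source, rates)
```

The same grouping works for landing page, campaign, or agent by swapping the key used to pick the bucket.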

Step-by-Step Implementation Guide for 2026

Implementation works best in phases rather than one big launch. A small team can stand up a useful framework in 30 days if the scope stays tight.

- Write one shared lead definition. Include fit, service area, budget, urgency, and decision-maker status.

- Tag every recorded call by source, campaign, landing page, and agent.

- Review the first 100 calls and note where scorers disagree.

- Adjust the rubric until it matches real buying behavior, not script trivia.

- Coach from call clips, then track whether the same issue drops the next month.

- Report findings to both marketing and sales, then fix the source or the script.
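For the step where you note scorer disagreement, one simple approach is to have two reviewers score the same calls and then measure the average gap per rubric area. The sketch below assumes each reviewer's scores are stored as `{call_id: {area: points}}`; that layout and the area names are hypothetical, and mean absolute gap is just one reasonable measure among several.

```python
from collections import defaultdict


def disagreement_by_area(
    reviewer_a: dict[str, dict[str, float]],
    reviewer_b: dict[str, dict[str, float]],
) -> dict[str, float]:
    """Mean absolute point gap per rubric area, over calls both reviewers scored."""
    gaps = defaultdict(list)
    shared_calls = reviewer_a.keys() & reviewer_b.keys()
    for call_id in shared_calls:
        scores_a, scores_b = reviewer_a[call_id], reviewer_b[call_id]
        # Only compare areas that both reviewers filled in for this call.
        for area in scores_a.keys() & scores_b.keys():
            gaps[area].append(abs(scores_a[area] - scores_b[area]))
    return {area: sum(values) / len(values) for area, values in gaps.items()}


# Illustrative scores for two calls reviewed by both people.
reviewer_a = {
    "call-1": {"qualification_depth": 25, "next_step_control": 20},
    "call-2": {"qualification_depth": 15, "next_step_control": 10},
}
reviewer_b = {
    "call-1": {"qualification_depth": 15, "next_step_control": 20},
    "call-2": {"qualification_depth": 20, "next_step_control": 10},
}
gaps = disagreement_by_area(reviewer_a, reviewer_b)
# Areas with the largest gap are the ones to discuss in calibration.
for area, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(area, gap)
```

Areas with a consistently large gap usually point to a vague full-score standard rather than a careless reviewer, which is a cue to tighten the rubric wording.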

In 2026, AI can cover far more than random spot checks. Still, human review matters because tone and context change meaning. If volume is rising, learn how to audit sales calls at scale so managers spend time coaching instead of hunting through recordings.

Common Pitfalls and How to Avoid Them

Most QA programs fail in ordinary ways. Teams grade script use harder than lead fit. They review only star reps or weak reps, so they miss the middle. Managers coach agents but never tell marketing that a keyword, ad, or form is attracting junk. Some teams also keep call files with loose access rules, which creates risk.

The fix is simple. Score for business outcomes first. Sample calls across all agents and sources. Hold one monthly meeting where marketing, sales, and QA review the same call themes together. That is where website development gaps, ad-message mismatch, and poor routing start to show up. Recent 2026 tools can flag missed questions in real time, but teams still need calibration so everyone judges calls the same way.
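Sampling across all agents and sources can be done with a small stratified draw, so the middle of the team is reviewed along with star and weak reps. This is a sketch under assumptions: the `agent` and `source` keys are a hypothetical record layout, and two calls per agent-source pair is an arbitrary default.

```python
import random
from collections import defaultdict


def sample_for_review(calls: list[dict], per_group: int = 2, seed: int = 0) -> list[dict]:
    """Pick up to `per_group` random calls from every (agent, source) pair."""
    rng = random.Random(seed)  # fixed seed keeps the draw reproducible for audits
    groups = defaultdict(list)
    for call in calls:
        groups[(call["agent"], call["source"])].append(call)

    picked = []
    for key in sorted(groups):  # sorted so the output order is stable
        members = groups[key]
        picked.extend(rng.sample(members, min(per_group, len(members))))
    return picked


# Illustrative call log: three agents, two sources, uneven volume.
call_log = [
    {"id": i, "agent": agent, "source": source}
    for i, (agent, source) in enumerate(
        [("ana", "paid_search")] * 5
        + [("ana", "organic")] * 1
        + [("ben", "paid_search")] * 3
        + [("cho", "organic")] * 4
    )
]
for call in sample_for_review(call_log):
    print(call)
```

Small groups are included in full, so a low-volume source or a new agent still gets reviewed instead of being crowded out by the busiest queue.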

A bad lead stops looking mysterious once you can hear the pattern. A strong call recording QA framework turns that pattern into cleaner campaigns, sharper agents, and more sales-ready conversations.

If your call data, ad data, and CRM still live in separate places, get in touch with us and build a process that improves lead quality without adding busywork.